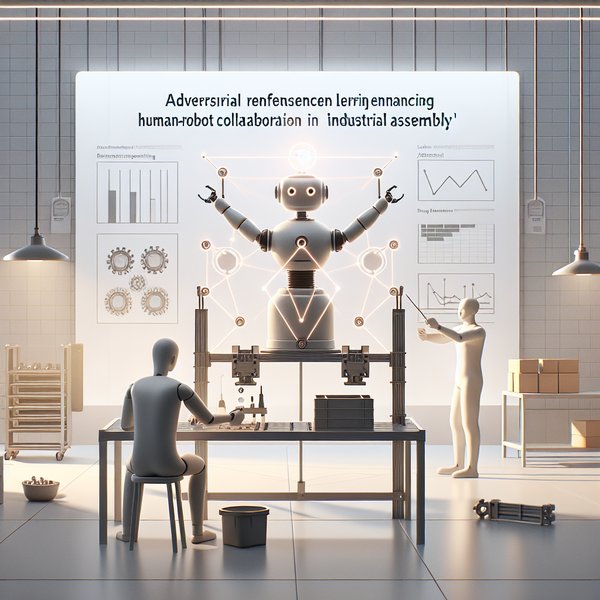

The paper introduces a method for making human-robot collaboration in industrial assembly operations more resilient. The approach uses Adversarial Reinforcement Learning (ARL) so that the robot learns an assembly strategy that can withstand human errors. The adversary can represent various uncertainties and disturbances in the work environment. By learning from adversarial interactions, the robot improves its performance and flexibility under challenging conditions.

The study applies ARL to the execution of assembly task sequences. The robot is one of the agents and learns how to support its human counterpart during assembly. A second agent, which plays the human role, serves as the adversary and intentionally introduces errors during assembly. The robot also learns to adjust its behavior to different levels of human skill and collaboration.
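The two-agent setup described above can be illustrated with a toy zero-sum game. This is only a sketch under stated assumptions, not the paper's environment or training procedure: the action labels (`ASSIST`, `CHECK`, `CORRECT`, `ERROR`), the reward values, and the fixed hand-written policies are all hypothetical and chosen purely for illustration. No learning takes place here; the code only shows how a robot policy can be scored against an error-injecting adversary.

```python
from typing import Callable, Tuple

# Hypothetical action labels, not taken from the paper.
ASSIST, CHECK = 0, 1      # robot actions
CORRECT, ERROR = 0, 1     # human (adversary) actions

Policy = Callable[[int, int], int]  # (progress, time step) -> action


def step(progress: int, robot_action: int, human_action: int) -> Tuple[int, float]:
    """Apply one simultaneous move and return (new_progress, robot_reward).

    The reward values are illustrative assumptions.
    """
    if human_action == ERROR:
        if robot_action == CHECK:
            return progress, -1.0   # error caught: only time is lost
        return progress, -5.0       # error missed: costly rework
    if robot_action == ASSIST:
        return progress + 1, 1.0    # correct step, fast progress
    return progress + 1, 0.0        # correct step, slowed by checking


def rollout(robot: Policy, human: Policy, n_tasks: int = 4,
            max_steps: int = 20) -> Tuple[float, float]:
    """Run one episode and return (robot_return, adversary_return).

    The game is zero-sum: the adversary receives the negated robot return.
    """
    progress, robot_return = 0, 0.0
    for t in range(max_steps):
        if progress >= n_tasks:
            break
        progress, reward = step(progress, robot(progress, t), human(progress, t))
        robot_return += reward
    return robot_return, -robot_return


# Fixed example policies (hypothetical).
always_assist: Policy = lambda progress, t: ASSIST
always_check: Policy = lambda progress, t: CHECK
never_errs: Policy = lambda progress, t: CORRECT
errs_on_even_steps: Policy = lambda progress, t: ERROR if t % 2 == 0 else CORRECT

if __name__ == "__main__":
    for name, robot in [("assist", always_assist), ("check", always_check)]:
        print(name,
              rollout(robot, never_errs)[0],
              rollout(robot, errs_on_even_steps)[0])
```

With these assumed rewards, the always-assisting robot scores 4.0 against an error-free partner but -16.0 against the error-injecting one, while the always-checking robot scores 0.0 and -4.0. In an actual ARL setup both policies would be learned rather than fixed, with the adversary trained to minimize the robot's return.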

The proposed method is evaluated in experiments that replicate complex assembly sequences and is compared with standard methods based on traditional optimization algorithms. The findings show that ARL may not outperform conventional optimization algorithms on task completion time, but it does provide resilience against human errors. The paper also discusses the implications of this approach for human-robot collaboration and proposes directions for future research.

The paper and related resources are available at https://ifip.hal.science/hal-05540657. It was submitted on Friday, March 6, 2026, at 4:42:09 PM and last modified the same day at 4:50:40 PM.